Over the past year, Silvia Cambié and I have been working together and thinking deeply about what we call human-centred AI. It’s an idea that has increasingly shaped how we approach the subject of artificial intelligence – not from the perspective of technology first, but from the perspective of people.

What does AI mean for human judgement? For accountability? For the values organisations claim to uphold?

Such questions have been at the heart of the work we’ve been doing with the International Association of Business Communicators (IABC), particularly through the AI Leadership & Communication Shared Interest Group we founded in December. They were also central to a webinar we hosted for IABC last month on ethics and artificial intelligence.

The core idea is straightforward. When organisations adopt AI, the starting point should not be the technology itself but its human consequences. What problems are we trying to solve? How do decisions remain accountable? And how do we ensure that human judgement and responsibility remain central?

Which is why a news report I read this week felt particularly striking.

From ethical frameworks to the realities of war

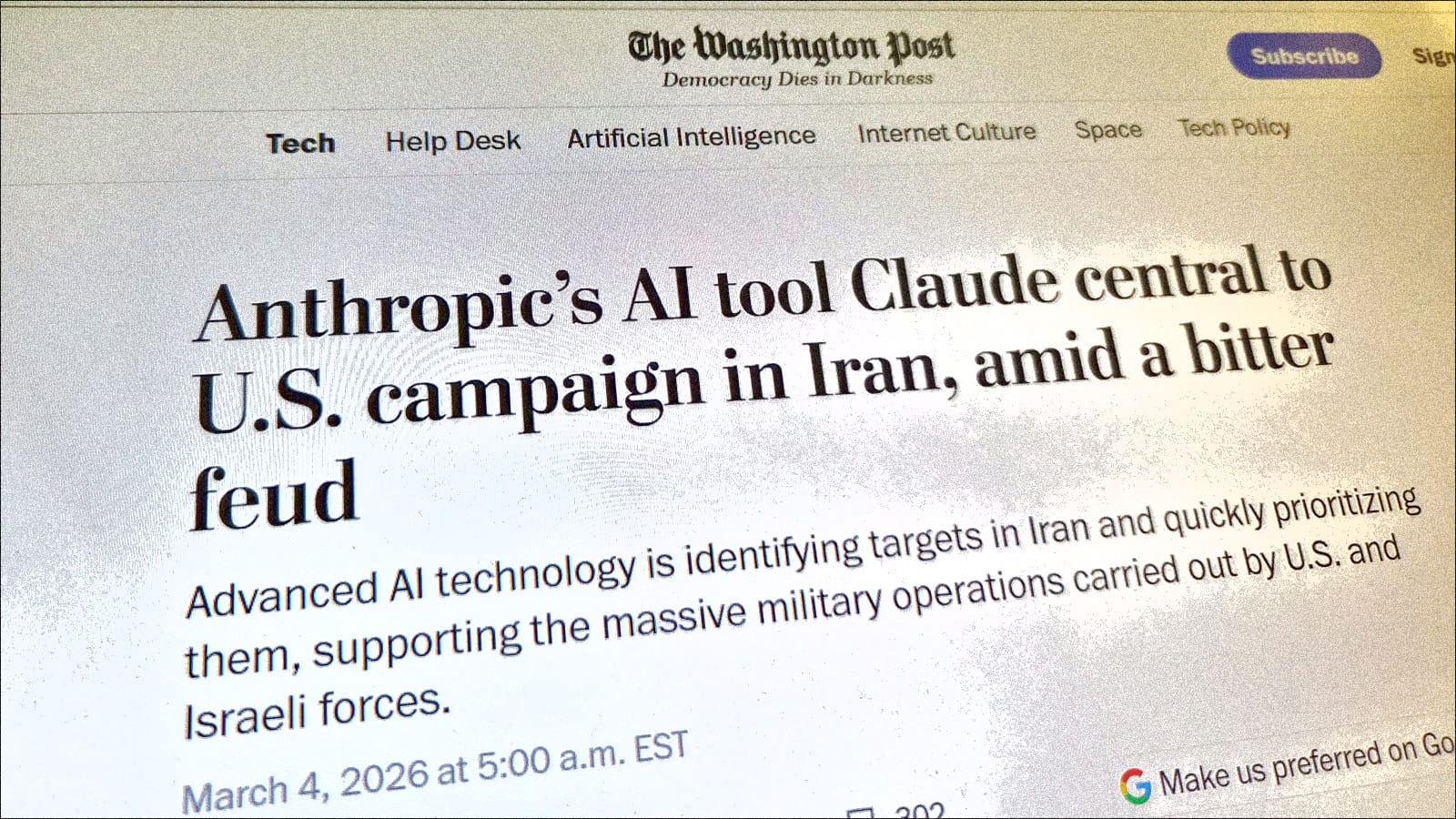

According to reporting in The Washington Post on 4 March, the U.S. military has been using advanced artificial intelligence systems as part of its operational planning in the current conflict involving Iran. The Pentagon’s Maven intelligence platform – developed by Palantir – is analysing large volumes of intelligence data and helping generate prioritised target lists. Embedded within that system is Claude, the AI model created by Anthropic.

The article describes how AI helps process intelligence from multiple sources and suggests possible targets more quickly than human analysts could manage alone.

To be clear, according to the report, humans remain responsible for operational decisions. But the technology is increasingly involved in analysing information and shaping the options under consideration.

For years, debates about AI in warfare focused on future scenarios: Skynet-like autonomous weapons systems or machines making battlefield decisions independently. What we appear to be seeing now is something more immediate: artificial intelligence becoming part of the analytical infrastructure of military operations.

“It’s dark news for the future of ethical AI,” wrote The Conversation a few days ago.

In the technology sector we often hear about responsible AI, ethical AI and human-centred AI. Many companies – including the developers of these models – emphasise the importance of aligning AI with human values and maintaining meaningful human oversight.

Those principles matter. They are part of the reason conversations about AI ethics have become so prominent over the past year.

But warfare exists in a very different environment.

Governments have always pursued technological advantage when national security is at stake. From radar to satellites to cyber capabilities, technologies that offer strategic benefits are rapidly incorporated into military systems once they prove useful.

Seen through that lens, the use of AI in military intelligence and planning may not be surprising. If a technology helps analyse complex information faster or more effectively, the incentive to adopt it is powerful.

Still, the ethical tension remains difficult to ignore.

Experts quoted by The Washington Post note that AI systems can make mistakes and that human verification is essential when decisions involve life and death.

Even so, the growing role of AI in analysing intelligence and prioritising information raises questions about how human judgement interacts with machine-generated insight.

Where the wisdom of the heart fits

Last summer, in a conversation for the For Immediate Release podcast that I co-host with Shel Holtz, the three of us – Silvia, Shel and I – spoke with Monsignor Paul Tighe of the Vatican about artificial intelligence and ethics. During that discussion, he used a phrase that has stayed with me: “the wisdom of the heart.”

His point was that as technologies become more powerful, the real challenge is not simply technical capability. It is ensuring that human judgement, reflection and moral responsibility remain at the centre of how those technologies are used.

That idea feels particularly relevant when artificial intelligence begins to shape decisions in environments where the stakes could not be higher.

None of this means governments will stop using artificial intelligence in conflict. History suggests quite the opposite. Once technologies prove strategically valuable, they rarely remain outside military systems for long.

But the story still leaves an important question hanging in the air.

Championing human-centred AI in the workplace is one thing. It becomes far more complicated when the same technologies are used in war.

I’ve long argued that human-centred AI isn’t a slogan – it’s a choice. So if artificial intelligence is meant to serve humanity, what does that promise mean when the same technology helps plan and conduct conflict?

Perhaps that is where the real test of human-centred AI begins.