A story in The Guardian caught my attention this week – one that is uncomfortably familiar.

A freelance book reviewer, writing for The New York Times, submitted a review that included passages strikingly similar to an earlier review of the same book in The Guardian. The similarity wasn’t spotted by an editor. It wasn’t caught in review. It was a reader who noticed the overlap.

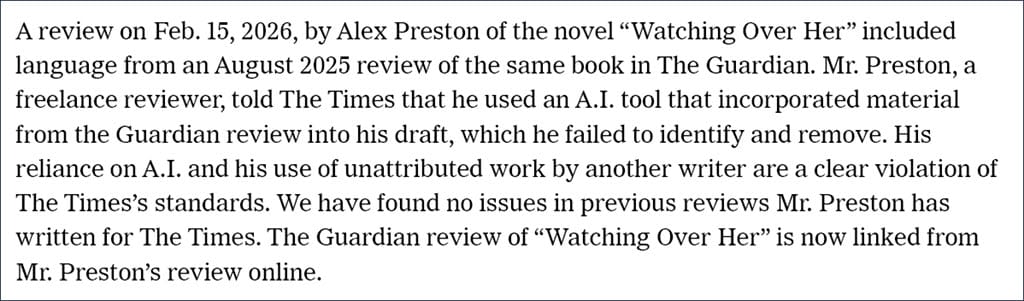

What followed was swift and public. The Times investigated. The reviewer, Alex Preston, acknowledged that he had used an AI tool to assist his writing and had failed to detect that it had incorporated material from another source.

The paper severed ties with him. An editor’s note was added, stating plainly that the use of unattributed material and reliance on AI in this way breached its standards.

It’s a stark example. But it’s not an isolated one.

This is not new

When I read this, I was reminded immediately of another case I wrote about last December.

In that instance, a Deloitte team presented a research report containing AI-generated hallucinations to its client, the government of Australia. Not stylistic overlap this time, but factual invention. The issue differed in detail but was identical in nature.

In both cases, AI output made its way into the final work without being properly checked.

The problem is not that AI was used – it’s that its output was trusted without question, or treated as final.

Verification is not optional

This brings me back to a principle I’ve been talking about for some time – if AI touched it, you must verify it.

That word – verify – matters more than ever.

It does not mean a quick read-through. It does not mean checking for typos or smoothing the tone. It means taking full responsibility for the content as if you had written every word yourself.

In practice, that means asking:

- Is this factually correct?

- Is this genuinely original?

- Is every link accurate and working?

- Does this meet professional and ethical standards?

- Does this reflect what I intended to say – or what the model inferred?

Verification is not proofreading. It’s accountability. And when that step is missed, there can be severe consequences.

AI output often reads well – fluent, confident, convincing. And when something reads well, we’re more inclined to trust it.

That’s where the discipline slips.

But responsibility doesn’t blur. If your name is on the work, it is yours. AI can assist with the work; it can even improve it. But it cannot take responsibility for it.

That part hasn’t changed.

Sources:

- Editors’ Note: April 1, 2026 (The New York Times, 1 April 2026)

- The New York Times drops freelance journalist who used AI to write book review (The Guardian, 31 March 2026)

- If AI Touched It, You Must Verify It (11 December 2025)